Training Deep Neural Networks on a GPU with Tensorflow

This notebook is a TensorFlow port of https://jovian.ml/aakashns/04-feedforward-nn

Despite the structural differences between TensorFlow and PyTorch, I have tried to port the PyTorch notebooks to TensorFlow, so that both frameworks can be learned side by side while following the course PyTorch: Zero to GANs by Aakash.

Part 4 of "Tensorflow: Zero to GANs"

This notebook is the fourth in a series of tutorials on building deep learning models with TensorFlow, an open-source neural network library. Check out the full series:

- Tensorflow Basics: Tensors & Gradients

- Linear Regression & Gradient Descent

- Image Classification using Logistic Regression

- Training Deep Neural Networks on a GPU

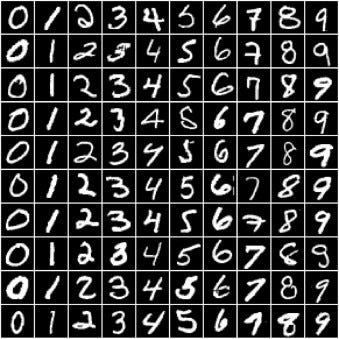

In the previous tutorial, we trained a logistic regression model to identify handwritten digits from the MNIST dataset with an accuracy of around 86%.

However, we also noticed that it's quite difficult to improve the accuracy beyond 87%, due to the limited power of the model. In this post, we'll try to improve upon it using a feedforward neural network.

Preparing the Data

The data preparation is identical to the previous tutorial. We begin by importing the required modules & classes.

import numpy as np

import tensorflow as tf

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, Reshape

import tensorflow_datasets as tfds

To view the device on which operations are performed, uncomment this line and run:

# tf.debugging.set_log_device_placement(True)
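As a complement to the logging flag above, here is a minimal sketch (not part of the original notebook) of a quieter way to check device placement. It assumes TensorFlow 2.x with eager execution enabled. The variable names `gpus`, `x`, and `y` are illustrative only.

```python
import tensorflow as tf

# List the GPUs TensorFlow can see; an empty list means ops will run on the CPU.
gpus = tf.config.list_physical_devices("GPU")
print("GPUs visible to TensorFlow:", gpus)

# In eager mode, every tensor records the device it was placed on.
x = tf.constant([[1.0, 2.0], [3.0, 4.0]])
y = tf.matmul(x, x)
print("Result computed on:", y.device)
```

If a GPU is available, the device string should end in something like `GPU:0`; otherwise it will name the CPU. Unlike `set_log_device_placement`, this inspects a single tensor rather than logging every operation.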